How Agentic AML ends the Compliance Blindspot with Contextual Intelligence

1 Oct 2025

Legacy AML screening built on static watchlists was designed for a slower era, one where risk evolved gradually, data was scarce, and human analysts could manually curate who mattered. In 2025, that model is no longer fit for purpose.

Watchlists are static snapshots: limited by human curation, narrow in scope, and blind to the reputational and contextual signals that now define real exposure. They flood institutions with false positives while missing hidden ownership, pre-enforcement controversies, and multilingual media indicators of risk. The result is a system optimised for control, not understanding.

After two decades of watchlist-driven compliance, the industry is trapped in an echo chamber: same lists, same logic, same blind spots. Breaking free requires solutions that understand context, not just match names.

Meanwhile, a new paradigm is emerging thanks to Agentic AML, where systems reason about context rather than match against lists. Instead of reproducing static judgments, they perform dynamic investigations, connecting evidence across registries, sanctions, leaks, and media to build transparent, explainable risk narratives.

VERA, CleverChain’s implementation of Agentic AML, exemplifies this shift. Acting as a digital investigator, it conducts autonomous yet auditable inquiries that interpret evidence, discard noise, and generate regulator-ready insights in minutes rather than hours.

With Agentic AML, institutions can expect:

Broader coverage, beyond sanctions and PEPs to registries, media, and reputational data;

Fewer false positives and fewer blind spots through contextual reasoning and entity resolution;

Explainable narratives with citations and ownership clarity, suitable for CDD, EDD, and audit;

Intelligence where no watchlist data exists, surfacing both risk and credible absence of risk;

Instant deployment and integration via web app, API, and MCP endpoints.

By automating not just detection but reasoning, Agentic AML transforms compliance from repetitive screening into a transparent, contextual, and risk-aligned investigative process — marking the industry’s shift from matching to understanding.

Why Watchlist‑based screening fails in 2025

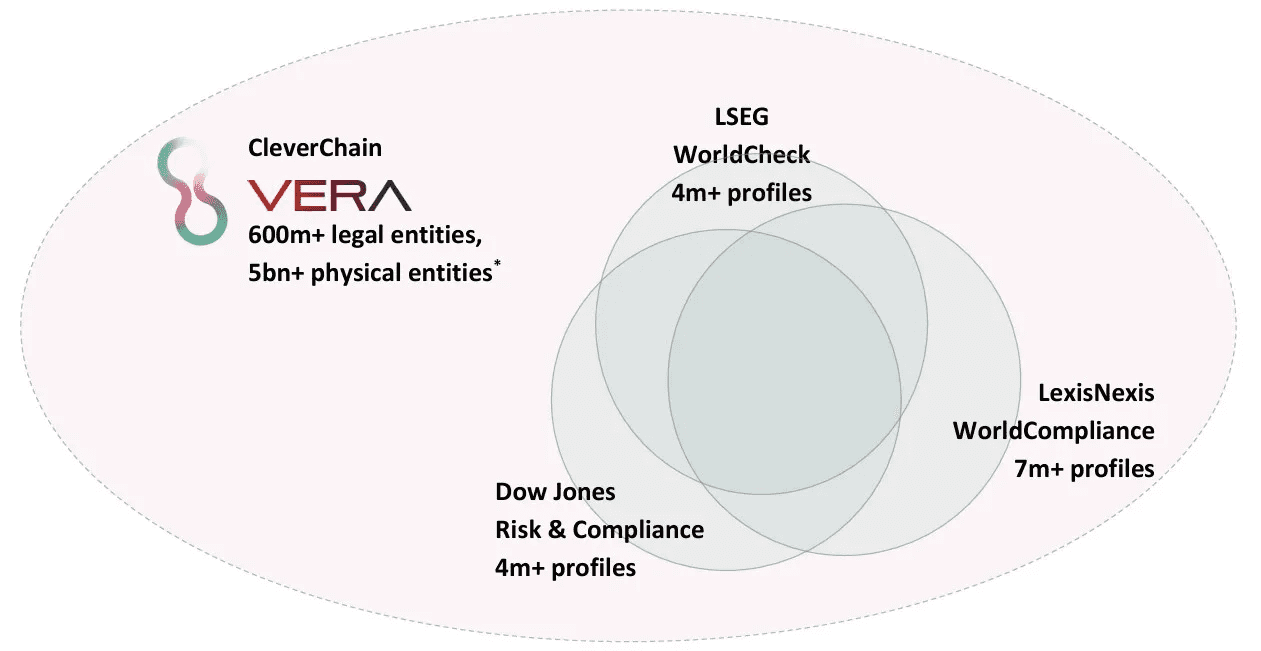

Global Data Coverage & Completeness*

* Note:

Estimates are based on the ability of VERA to access real-time registry, commercial and open-source data, and to gather intelligence on any entity globally. For legal entities, VERA captures a broader universe than the main commercial data providers. For physical entities, the estimate is based on the universe of individuals with a digital footprint, e.g. social networks, mobile subscriptions.

* Sources:

Dun & Bradstreet - “About Us / Our Company”

LexisNexis Risk Solutions - “Bridger Insight® XG: Automate Compliance Screening”

Moody’s Analytics - “Orbis: global company‑reference data”

Dow Jones Risk & Compliance - “Business Intelligence / Risk”

LSEG Risk Intelligence - “World‑Check Data File”

International Telecommunication Union (ITU) - “Facts and Figures 2024: Internet use”

DataReportal - “Digital 2025: State of Social”

GSMA - “Mobile for Development: Mobile‑Policy Handbook / Mobile‑Internet Connectivity”

Watchlists were never designed to discover risk. They were built to summarise it, as static compilations of entities identified by analysts at a point in time. In an era when information moves globally and risk surfaces within hours, that logic no longer holds.

Today’s financial crime landscape evolves faster than the cycles of manual curation. Each list entry is the product of human judgment, lagging behind events, and bounded by capacity.

As a result, watchlists offer the illusion of completeness while omitting vast areas of exposure that have not yet been captured, translated, or formalized.

The limitations are structural, not technical:

1. Narrow and retrospective

Watchlists reflect what analysts once knew, not what risk looks like now. They are centred on sanctions and politically exposed persons while missing hidden ownership, newly incorporated fronts, and fast-moving reputational risks that emerge long before enforcement.

2. Exclusion of positive signals

Because lists accumulate only negative events, they cannot account for acquittals, clean-ups, or restored credibility. This makes risk assessments one-sided and self-reinforcing.

3. Blind to reputational context and language

Reputational and ESG-related exposures are now material to AML and compliance outcomes. Yet most lists remain monolingual and narrow: an investigative report in Arabic or Chinese that reshapes perception in-market may never reach an English-language dataset.

4. False‑positive engine

Name-matching without reasoning produces alerts at industrial scale. Without corroborating context such as geography, role, or chronology, every common name becomes a potential escalation, draining resources that should target actual risk.

5. The false-negative bubble

The same few data providers curate nearly identical datasets of roughly 4–6 million entities. This shared reference point acts as an echo chamber: a global compliance industry screening against the same narrow universe and collectively missing the rest.

In short, watchlists capture what was once risky, not what is. They overlook reputational signals that often precede enforcement, and they can hardly adjust as narratives evolve. The future of AML lies in continuous, contextual investigation, where reasoning replaces curation and systems identify risk as it emerges, not after it is recorded.

Quantifying the pain

Across the industry, false-positive rates in watchlist screening routinely exceed an estimated 90%. Millions of alerts are generated each month, but the vast majority are irrelevant. Meanwhile, entire compliance teams operate around disproving noise rather than uncovering risk. The result is a paradox: compliance operations grow, but assurance does not.

Within this context, the pain from watchlist screening can be quantified in terms of alert quality, cost, capacity, unknown risks and operational fatigue:

1. Alert quality

List-based screening produces an extremely high volume of false positives because names are evaluated out of context. Shared surnames, transliteration differences, or missing identifiers can trigger thousands of spurious hits. Every one of them consumes analyst time, quality assurance reviews, and management oversight, all for an event that does not represent real exposure.

2. Cost and capacity

As alert volumes increase, institutions add headcount instead of accuracy. Each analyst’s marginal productivity falls as time is spent triaging irrelevant hits. Budgets expand, but the quality of risk assessment stagnates. In some institutions, alert review consumes more than half of total financial crime operating costs.

3. Risk of blind spots

While teams chase false positives, genuine risks slip through as false negatives: cases where real exposure exists outside the lists being screened. These may include hidden ownership links, credible adverse-media exposures, or reputational controversies that never make it into a watchlist.

4. Operational fatigue

The monotony of resolving low-value alerts drives burnout and turnover across compliance teams, eroding institutional knowledge and increasing error rates. With performance often measured on speed rather than insight, analysts begin reviews assuming alerts are false positives. This conditioning reinforces confirmation bias and raises the risk that genuine red flags are dismissed as noise.
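To make the scale concrete, the arithmetic can be sketched in a few lines of Python. The alert volume and review time below are hypothetical placeholders, not measured figures; only the 90% false-positive rate echoes the estimate quoted above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AlertWorkload:
    """Illustrative inputs only; replace with an institution's own figures."""
    alerts_per_month: int        # hypothetical monthly alert volume
    false_positive_rate: float   # share of alerts with no real exposure
    minutes_per_alert: float     # hypothetical average review time

    def false_alerts(self) -> int:
        return round(self.alerts_per_month * self.false_positive_rate)

    def true_alerts(self) -> int:
        return self.alerts_per_month - self.false_alerts()

    def hours_on_false_alerts(self) -> float:
        return self.false_alerts() * self.minutes_per_alert / 60


workload = AlertWorkload(alerts_per_month=10_000,
                         false_positive_rate=0.90,
                         minutes_per_alert=12)
print(workload.false_alerts())           # 9000
print(workload.true_alerts())            # 1000
print(workload.hours_on_false_alerts())  # 1800.0
```

Under these assumed inputs, 1,800 analyst hours a month go to alerts that carry no exposure, which is the capacity the following sections argue should be redirected.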

What regulators expect now

Regulators across the globe have raised the bar for financial-crime compliance. Supervision is shifting from procedural box-ticking to evidence-based understanding of risk. Institutions are now assessed on how they identify, interpret, and document exposure, not merely on whether they screened a name.

1. Risk‑based, contextual decisions

Controls must scale with risk and incorporate relevant data sources, not rely solely on static watchlists. Supervisors expect firms to demonstrate how context, such as geography, ownership, and behavioral patterns, informed each decision.

2. Ongoing monitoring and adverse media coverage

Periodic checks are no longer sufficient. Regulators expect continuous or event-driven monitoring that captures new risk events and credible negative news in real time, supported by consistent, explainable processes.

3. Beneficial ownership and control clarity

Firms must identify and understand who ultimately controls counterparties, beyond legal ownership. The expectation extends to indirect relationships, complex shareholding, and third-party networks that reveal hidden influence or exposure.

4. Explainability and auditability

Every decision — particularly in enhanced due diligence (EDD) — must be traceable and evidenced with sources, timelines, and reasoning that auditors and regulators can follow. This requirement applies equally to human and AI-supported analysis.

The message is clear: regulators now expect AML controls to think contextually, act continuously, and explain transparently. Traditional watchlist screening cannot meet those standards on its own.

From static checks to Agentic AML

Agentic AML marks a fundamental shift in how financial institutions identify and assess risk: where legacy systems apply static rules and binary matches, agentic systems use reasoning to interpret evidence in real time. They don’t simply check names; they investigate context, relationships, and ownership to reach explainable conclusions.

Rather than operating as passive filters, agentic systems behave like digital analysts: they gather information across registries, sanctions, leaks, and multilingual media, testing hypotheses until a coherent picture of risk emerges. Each step is logged, cited, and auditable.

The change is profound:

From matches to meaning — resolving real-world entities, relationships, and ownership rather than strings of text.

From alerts to narratives — producing investigator-grade reports with sources, timelines, and reasoning that withstand regulatory scrutiny.

From rigid rules to reasoning — combining retrieval, logic, and evidence weighting to discount irrelevant hits and elevate credible risks.

Agentic AML transforms screening from a static control into an active investigation. It delivers consistency, explainability, and depth of understanding that traditional list-based approaches were never designed to achieve.

Five reasons VERA’s agentic approach changes compliance forever

VERA’s agentic approach is transformative:

1. Autonomous investigations, not just name matching

VERA operates as a digital investigator: autonomous yet explainable. It collects and cross-references data from corporate registries, sanctions lists, leak databases, and multilingual media to connect the dots across people, companies, and jurisdictions. The system forms and tests hypotheses, presenting its reasoning transparently for human review.

2. Contextual reasoning slashes both false positives and false negatives

By weighing corroborating identifiers, such as dates of birth, roles, locations, and network relationships, VERA filters out irrelevant hits and captures hidden ones. Contextual reasoning transforms static screening into precision risk detection, allowing teams to focus effort where exposure is real.

3. Human‑readable, regulator‑ready narratives

Each investigation produces an evidence-based narrative: a concise report with citations, timelines, and ownership maps. These reports explain not just what was found but why it matters, aligning seamlessly with audit and EDD documentation standards.

4. Real-time intelligence beyond the stale watchlist universe

Most entities of interest never appear on any formal list. VERA builds intelligence profiles even where no watchlist data exists, analysing registries and local-language media to surface emerging or reputational risk, or to confirm its credible absence.

5. Configurable based on each institution’s own policy and risk appetite

Thresholds, data sources, and reasoning logic adapt to an organisation’s own frameworks, jurisdictions, and products. Compliance teams can tune the system for higher sensitivity in onboarding or higher precision in periodic reviews, maintaining full policy alignment.

Collectively, these capabilities turn compliance from a reactive control into a proactive intelligence function, combining automation, transparency, and contextual depth to achieve risk coverage that legacy screening cannot match.
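As an illustration of the contextual reasoning in point 2, the sketch below shows how corroborating identifiers could discount a name-only hit. It is a simplified stand-in, not VERA’s actual logic: the `Profile` fields, the token-overlap similarity, and the 0.3 mismatch penalty are all assumptions chosen for readability.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Profile:
    """Hypothetical record for a customer or a list/media hit."""
    name: str
    birth_year: Optional[int] = None
    country: Optional[str] = None
    role: Optional[str] = None


def name_tokens(name: str) -> frozenset:
    """Lower-case the name, treat punctuation as spaces, and split into tokens."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in name.lower())
    return frozenset(cleaned.split())


def name_similarity(a: str, b: str) -> float:
    """Jaccard overlap of name tokens: a deliberately simple stand-in."""
    tokens_a, tokens_b = name_tokens(a), name_tokens(b)
    if not tokens_a or not tokens_b:
        return 0.0
    return len(tokens_a & tokens_b) / len(tokens_a | tokens_b)


MISMATCH_PENALTY = 0.3  # assumed weight applied per conflicting identifier


def contextual_score(customer: Profile, hit: Profile) -> float:
    """Scale name similarity down for every identifier that conflicts."""
    score = name_similarity(customer.name, hit.name)
    for field in ("birth_year", "country", "role"):
        ours, theirs = getattr(customer, field), getattr(hit, field)
        if ours is None or theirs is None:
            continue  # missing data neither confirms nor refutes the match
        if ours != theirs:
            score *= MISMATCH_PENALTY
    return score


customer = Profile("Maria Garcia", birth_year=1985, country="ES", role="teacher")
lookalike = Profile("Maria Garcia", birth_year=1962, country="MX", role="minister")
same_person = Profile("Garcia, Maria", birth_year=1985, country="ES")

print(name_similarity(customer.name, lookalike.name))  # 1.0: a name-only match fires
print(contextual_score(customer, lookalike) < 0.1)      # True: context discounts it
print(contextual_score(customer, same_person))          # 1.0: context corroborates
```

A production system would use far richer matching (transliteration, partial dates, network relationships); the point here is only that the same name can yield very different scores once context is weighed.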

How VERA works (at a glance)

VERA conducts end-to-end investigations as an autonomous but explainable loop, continuously gathering evidence, testing hypotheses, and refining conclusions until it reaches a defensible, auditable outcome.

– Entity discovery and resolution

VERA identifies and reconciles entities across registries, sanctions data, leaks, and public sources. It corroborates names with supporting attributes and context – such as dates of birth, addresses, corporate IDs, and roles – to resolve identities across languages and transliterations.

– Relationship, ownership and control analysis

The system analyses corporate hierarchies to reveal directors, shareholders, and ultimate beneficial owners (UBOs), including indirect or circular control structures. Network reasoning exposes hidden links between counterparties that are invisible to list-based screening and legacy commercial databases.

– Contextual screening

Each sanction, PEP, or adverse-media signal is re-evaluated in context — geography, sector, role, and chronology. This allows irrelevant lookalikes to be discounted and genuine risks to be prioritised.

– Iterative reasoning and hypothesis testing

Rather than applying static rules, VERA asks investigative questions: What evidence supports this risk? What would disprove it? It retrieves targeted data to confirm or reject each hypothesis, converging on a clear conclusion.

– Narrative reporting and evidence logging

Every step – from data retrieval to conclusion – is logged and timestamped. VERA generates concise, cited reports with ownership maps, timelines, and summaries suitable for CDD, EDD, and audit documentation.

– Human‑in‑the‑loop governance

Analysts can accept or override conclusions, add context, and feed outcomes back into the system, continually deepening the investigation, improving performance, and aligning with policy.

The overall result: investigations that are faster, deeper, and fully explainable, combining the diligence of a human analyst with the reach and consistency of automation.
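The ownership and control step above mentions indirect and circular structures. As a minimal sketch of how such a calculation might look (an assumption for illustration, not VERA’s method), the code below multiplies shares along each cycle-free ownership path and sums the results. The entities, percentages, and the 25% threshold are hypothetical, and ignoring loops is a simplification that slightly understates stakes amplified by cross-holdings.

```python
from collections import defaultdict

# Hypothetical ownership edges: (owner, owned entity, fraction of shares held).
EDGES = [
    ("Person P", "HoldCo A", 0.60),
    ("HoldCo A", "HoldCo B", 0.50),
    ("HoldCo B", "HoldCo A", 0.20),    # circular cross-holding
    ("HoldCo B", "Target Ltd", 0.90),
    ("Person Q", "Target Ltd", 0.10),
]
UBO_THRESHOLD = 0.25  # assumed policy threshold; set per jurisdiction and policy


def build_graph(edges):
    """Map each owner to the list of (owned entity, share) pairs it holds."""
    graph = defaultdict(list)
    for owner, owned, share in edges:
        graph[owner].append((owned, share))
    return graph


def effective_stake(graph, owner, target, visited=frozenset()):
    """Sum the product of shares along every cycle-free path from owner to target."""
    visited = visited | {owner}
    total = 0.0
    for owned, share in graph.get(owner, []):
        if owned == target:
            total += share
        elif owned not in visited:  # do not walk back into the current path
            total += share * effective_stake(graph, owned, target, visited)
    return total


graph = build_graph(EDGES)
for person in ("Person P", "Person Q"):
    stake = effective_stake(graph, person, "Target Ltd")
    print(f"{person}: {stake:.2f} UBO={stake >= UBO_THRESHOLD}")
```

In this example Person P holds no shares in Target Ltd directly, yet controls roughly 27% through two holding companies, while the `visited` set stops the traversal from looping through the cross-holding between HoldCo A and HoldCo B.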

Measurable outcomes

Early deployments of Agentic AML systems demonstrate significant measurable improvements in both detection accuracy and operational efficiency. By reasoning across diverse data sources and documenting every step, VERA delivers outcomes that can be quantified, audited, and sustained at scale.

Precision: Contextual discounting and robust entity resolution materially reduce false positives by well over 95%, freeing analysts to focus on genuinely high-risk cases.

Breadth: Fewer false negatives as hidden ownership, local‑language media, and leaks are incorporated.

Speed: Faster time‑to‑decision from automated investigations and ready‑to‑file narratives.

Consistency: Policy‑aligned logic, reproducible outcomes across teams and regions.

Auditability: Every decision is traceable, with source‑linked, time‑stamped reports that meet internal audit and regulators’ expectations.

Productivity: By eliminating repetitive false-alert handling, analysts can redirect capacity toward strategic investigations, thematic reviews, and emerging-risk analysis, shifting compliance from a cost center to an intelligence function.

Together, these gains show that reasoning-based AML is not just conceptually stronger but operationally superior, producing higher accuracy, lower cost, and clearer accountability across the entire compliance lifecycle.

Implementation and Governance

Agentic AML is built with governance at its core. Every investigation, configuration, and decision is transparent, controllable, and auditable, ensuring automation enhances oversight rather than obscuring it.

Implementation builds on the following cornerstones:

Deployment

Institutions can activate VERA instantly through a secure web app interface — no installation or infrastructure changes required. For deeper integration, APIs and MCP endpoints connect directly to existing case managers, screening platforms, or internal workflows. Deployment can begin with a single user group and scale organisation-wide within days.

Policy fit and calibration

Configure thresholds by product, customer segment, and jurisdiction. Calibrate to be conservative in onboarding and more dynamic in periodic reviews.
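One way to picture this calibration is a small rule table in which the most specific matching rule wins. The sketch below is an assumption for illustration only: the rule keys, tier names, and threshold values are hypothetical and do not describe VERA’s configuration format.

```python
from typing import Optional

# Hypothetical rules: (review_type, jurisdiction_tier, alert_threshold).
# None acts as a wildcard; a lower threshold means more alerts (higher sensitivity).
POLICY_RULES = [
    ("onboarding", "high_risk", 0.30),
    ("onboarding", None, 0.40),
    ("periodic_review", None, 0.60),
    (None, None, 0.50),  # global default
]


def alert_threshold(review_type: str, jurisdiction_tier: str) -> float:
    """Return the threshold from the most specific rule that matches."""
    best_threshold: Optional[float] = None
    best_specificity = -1
    for rule_type, rule_tier, threshold in POLICY_RULES:
        if rule_type not in (None, review_type):
            continue
        if rule_tier not in (None, jurisdiction_tier):
            continue
        specificity = (rule_type is not None) + (rule_tier is not None)
        if specificity > best_specificity:
            best_threshold, best_specificity = threshold, specificity
    if best_threshold is None:
        raise LookupError("no policy rule matched")
    return best_threshold


print(alert_threshold("onboarding", "high_risk"))       # 0.3
print(alert_threshold("onboarding", "standard"))        # 0.4
print(alert_threshold("periodic_review", "high_risk"))  # 0.6
```

Keeping the rules as data rather than code means a policy change is a reviewed edit to one table, which fits the audit expectations described in this section.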

Controls & Quality Assurance

Every reasoning step and decision output is logged for full traceability. Supervisors can sample investigations, review underlying evidence, and simulate scenarios to test sensitivity and bias.
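A common pattern for this kind of traceability is an append-only log in which each entry carries a hash of the one before it, paired with reproducible sampling for supervisor review. The sketch below illustrates that general pattern using the Python standard library; the field names and sampling rate are assumptions, and it is not a description of how VERA stores its logs.

```python
import hashlib
import json
import random
from datetime import datetime, timezone


def _digest(entry):
    """Hash every field except the stored hash itself."""
    payload = {k: v for k, v in entry.items() if k != "hash"}
    return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()


def append_entry(log, step, detail):
    """Append a timestamped entry whose hash also covers the previous entry."""
    entry = {
        "step": step,
        "detail": detail,
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "prev_hash": log[-1]["hash"] if log else "",
    }
    entry["hash"] = _digest(entry)
    log.append(entry)
    return entry


def verify(log):
    """Return True only if no entry was altered, removed, or reordered."""
    prev = ""
    for entry in log:
        if entry["prev_hash"] != prev or entry["hash"] != _digest(entry):
            return False
        prev = entry["hash"]
    return True


def qa_sample(case_ids, rate, seed):
    """Pick a reproducible random sample of cases for supervisor review."""
    cases = list(case_ids)
    if not cases:
        return []
    k = max(1, round(len(cases) * rate))
    return random.Random(seed).sample(cases, k)


log = []
append_entry(log, "retrieve", "queried corporate registry")
append_entry(log, "assess", "sanctions hit discounted: birth year conflicts")
append_entry(log, "conclude", "no material risk identified")
print(verify(log))                           # True
print(len(qa_sample(range(100), 0.10, 7)))   # 10
```

Because each hash depends on the previous entry, editing any earlier step after the fact makes `verify` fail, and fixing the seed lets an auditor reproduce exactly which cases were sampled.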

Data protection and access management

VERA adheres to strict privacy and security standards. Data minimisation, encryption in transit and at rest, and granular role-based access controls ensure only authorised users can view or act on information. Audit trails record all activity for compliance with GDPR and equivalent frameworks.

Governance outcome

These safeguards mean institutions can quickly deploy advanced reasoning systems while maintaining regulatory confidence. Decision logs, QA sampling, and continuous feedback loops provide the evidence base for both internal audit and external supervisory review.

With explainability, security, and control embedded by design, Agentic AML is not only faster: it is safer, more accountable, and more sustainable than traditional list-based compliance.

A comparative overview

Table 1 – Watchlist-only screening vs VERA, by capability

| Capability | Watchlist-only screening | VERA’s Agentic Due Diligence / AML |
| --- | --- | --- |
| Coverage | Sanctions / PEP lists / FinCrime adverse media | Registries + sanctions + leaks + global/local media + reputational signals |
| Entity resolution | Basic fuzzy name matching | Multi-attribute resolution (DOB, roles, geos) + network context |
| Positive information | Not captured | Captured and reflected in residual risk |
| Reputation risk | Minimal | Systematic multilingual adverse-media assessment |
| Output | Hit/no-hit, short notes | Narrative reports with context, citations, timelines, ownership maps |
| False positives | High by design | Actively discounted with context |
| False negatives | Frequent (missed context) | Reduced via broader sources and reasoning |
| Auditability | Limited | Full logs and regulator-ready |

Traditional screening delivers control without understanding – repeating the same static data across the industry. Agentic AML transforms this into a living process: one that reasons, explains, and adapts, providing risk visibility and regulatory assurance in a single workflow.

Conclusion: from “name screening” to contextual AML with actionable intelligence

By automating not just matching but thinking, VERA turns fragmented, manual workflows into a cohesive, explainable, and risk‑aligned process. That means fewer false alerts, broader coverage, and decisions regulators and executives can trust.

After two decades of rule-based screening, the financial-crime industry has reached a turning point: the limitations of static watchlists, such as narrow coverage, lagging updates, and high false-alert rates, are now structural, not operational. Regulators expect contextual understanding, continuous monitoring, and explainable decisions.

These goals cannot be met by list matching alone. Agentic AML replaces static checks with dynamic reasoning: instead of asking “Does this name appear on a list?”, it asks “What does the evidence tell us about this entity, its connections, and its credibility?” The result is not an alert, but a defensible narrative and an investigative record built on explainable intelligence.

VERA embodies this transformation. By automating not just detection but reasoning, it turns fragmented workflows into a cohesive process that surfaces real risk, explains its logic, and stands up to audit.

The shift from matching to understanding marks the next chapter of compliance — one where automation thinks contextually, investigators act strategically, and institutions finally achieve both efficiency and insight.

Annex

Table 2 – Comparative overview of AML screening tools in the market

| Theme | LSEG | Dow Jones | LexisNexis |  |  |
| --- | --- | --- | --- | --- | --- |
| Data Coverage & Completeness / High risks[1] | Medium – Curated list of 4m+ profiles across 30 risk categories[2]. However, coverage depends on analysts’ capacity and excludes relevant risk categories (e.g. reputational risk) | Medium – Curated list of 4m+ profiles[3]. However, coverage depends on analysts’ capacity and excludes relevant risk categories (e.g. reputational risk) | Coverage: Medium – 45,000 news resources in 200 countries; 7m+ profiles[4]. Completeness: Low – Not designed for AML screening, focus on news and media sources | Strong – Widest coverage by default, but unstructured nature, no entity resolution mechanism and no profile standardisation make it virtually impossible to automate and manage at scale | Strongest – Combines the depth of both watchlists and open source, applies contextual automation to find financial crime and reputational risks, automatically removing the noise and capturing false negatives |
| Network Intelligence | N/A – Not a core feature | N/A – Not a core feature | N/A – Not a core feature | Low – Not a core feature, but may yield results, although unstructured, not univocal and time-intense | Strongest – Ability to trace relationships of physical and legal entities in a network of third parties, e.g. as director, UBO, or shareholder |
| Governance Transparency and Auditability[5] | Moderate – Systematic evidence (e.g. links), however no audit trail on the steps and logic of analysis | Moderate – Systematic evidence (e.g. links), however no audit trail on the steps and logic of analysis | Moderate – Systematic evidence (e.g. links), however no audit trail on the steps and logic of analysis | N/A – Out of scope | Strongest – Time-stamped reports including evidentiary reasoning and documentation for each investigative step |
| Real-time Capabilities | N/A – Follows review cycles | N/A – Follows review cycles | Strong – News focus, although no entity resolution mechanism | Strong – News focus, although no entity resolution mechanism | Strongest – Real-time intelligence enhanced by entity resolution and contextual analysis |
| Multi-language capability | Low/Moderate – Cannot search for names in local language e.g. Arabic, Cyrillic, Chinese | Low/Moderate – Cannot search for names in local language e.g. Arabic, Cyrillic, Chinese | Strong – 37 languages available[6] | Strong – Has native multi-language capability, although output is unstructured and in foreign language too | Strongest – Can combine searches in any language while keeping the output in the desired language and relying on powerful fuzzy logic |
| Interpretation Capability | Low/Moderate – Historically high share of false positives, also considering the lack of systematic data collection | Moderate – e.g. no scenario-based discounting, removal of circular ownerships, ability to go beyond local registries | Low – Significant manual effort to discount false positives is required | Low – Not a core feature, but may yield results, although unstructured, not univocal and time-intense | Strongest – Customisable scenario-based and contextual discounting methodology for screening; multi-layer UBO analysis beyond local registries |
| Process Automation & Standardisation | None – Stand-alone tool requiring manual intervention, heterogeneous output | None – Stand-alone tool requiring manual intervention, heterogeneous output | None – Stand-alone tool requiring manual intervention, heterogeneous output | None – Stand-alone tool requiring manual intervention, heterogeneous output | Strongest – Natively end-to-end, custom workflow unifying all due diligence sources and steps into a standardized output (content and format) |
| Quality Assurance | N/A – Out of scope | N/A – Out of scope | N/A – Out of scope | N/A – Out of scope | Strongest – Native feature for auditability and escalation purposes |
| Processing speed | Strong – But limited to the specific domain, requires highly manual intervention | Strong – But limited to the specific domain, requires highly manual intervention | Strong – But limited to the specific domain, requires highly manual intervention | Strong – But requires the highest manual intervention | Strongest – As it covers the whole due diligence process holistically |
| Customisation / Calibration | None/Low | None/Low | None/Low | N/A | Strongest – Ability to customise protocol, format, data blocks, design new sections, etc. |
| Escalation & Support | Moderate – Standard helpline and escalation process | Moderate – Standard helpline and escalation process | Moderate – Standard helpline and escalation process | N/A | Strongest – Leverage in-house expertise combined with preferential access to data providers |

* Notes:

[1] Relevant data points, including client information and high risks (e.g. sanctions, adverse media, enforcement, PEP). Theme focus: how comprehensively the tool captures the data fields required for regulatory and risk assessment purposes.

[2] Source: https://www.lseg.com/content/dam/risk-intelligence/en_us/documents/fact-sheets/world-check-data-file.pdf

[3] Source: https://www.dowjones.com/business-intelligence/risk/products/financial-crime/

[4] Source: https://risk.lexisnexis.com/global/en/products/bridger-insight-xg-global

[5] Focus on how effectively the tool surfaces governance red flags such as high risks, nominee directors, cross-entity linkages, or known high-risk individuals.

[6] Source: https://www.lexisnexis.com/en-gb/products/research-insights/nexis